Search Results for cross

Range of Human Performance

Featured Articles

Range of Human Performance

Range of Human Performance

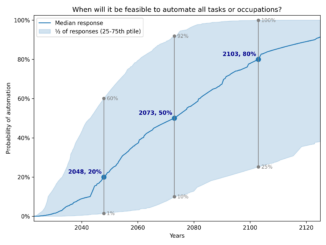

AI Timelines

Range of Human Performance

Reports

Essay Competition on the Automation of Wisdom and Philosophy