By Katja Grace, 28 June 2017

People often wonder what AI researchers think about AI risk. A good collection of quotes can tell us that worry about AI is no longer a fringe view: many big names are concerned. But without a good sense of how many names there are in total, how big they are, and what publication biases come between us and them, it has been hard (for me at least) to get a clear view of the distribution of opinion.

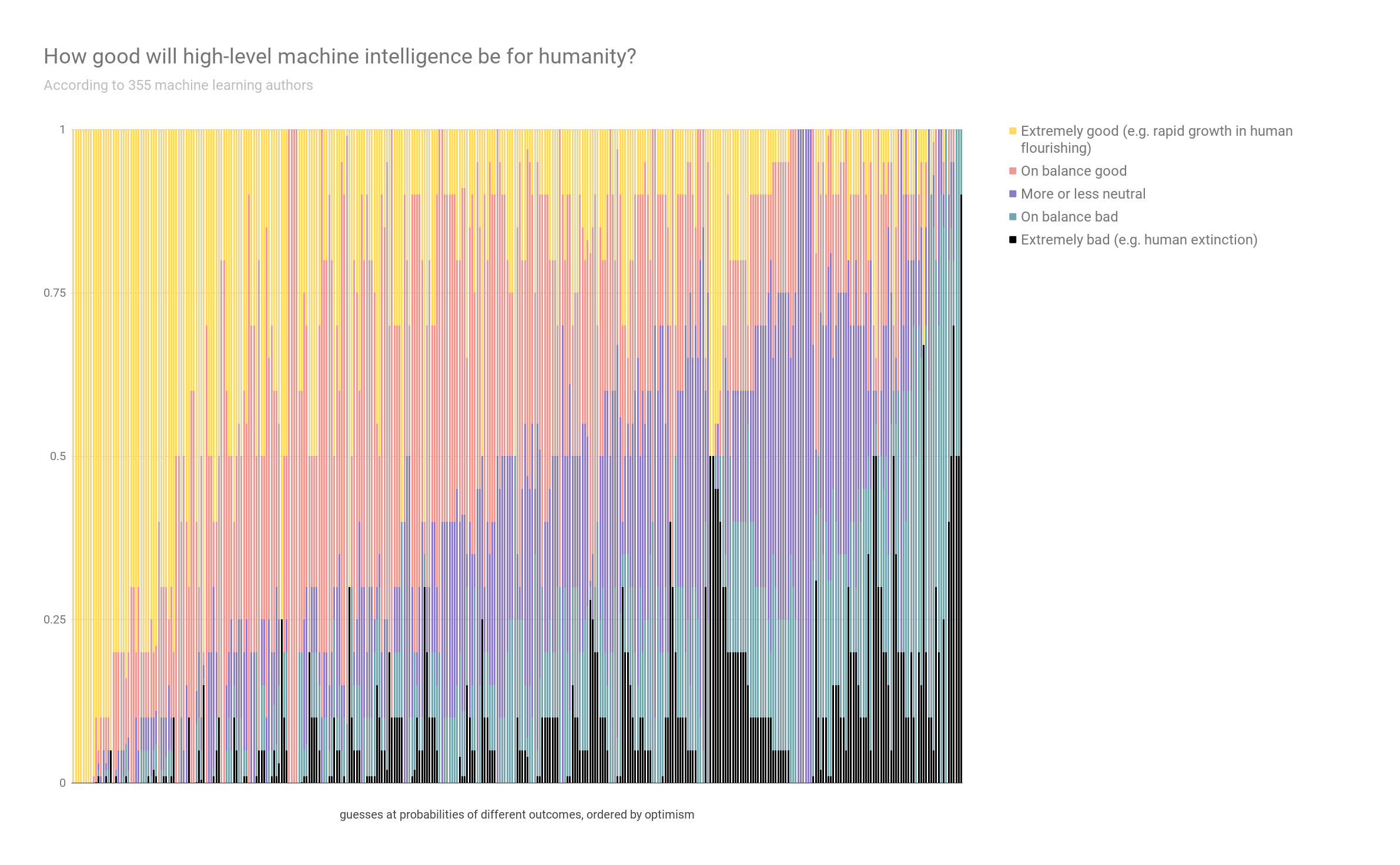

Our survey offers some new evidence on these questions. Here, 355 machine learning researchers weigh in on how good or bad they expect the results of ‘high-level machine intelligence’ to be for humanity:

(Click to expand)

Each column is one person’s opinion of how our chances are divided between outcomes. I put them roughly in order of optimism, to make it intelligible to look at.
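For anyone curious how an ordering like this might be produced, here is a minimal sketch. It is not the method actually used for the chart. It assumes each respondent gave probabilities over five outcome categories, and it ranks them by a made-up score (the labels in `OUTCOMES`, the weights in `SCORES`, and the example responses are all hypothetical, chosen only for illustration).

```python
# Hypothetical outcome labels, ordered best to worst (an assumption for
# illustration; only 'extremely bad' is named in this post).
OUTCOMES = ["extremely good", "good", "neutral", "bad", "extremely bad"]

# Hypothetical weights, used only to rank respondents against each other.
SCORES = [2, 1, 0, -1, -2]


def optimism(probs):
    """Expected score of one respondent's probability split over OUTCOMES."""
    return sum(p * s for p, s in zip(probs, SCORES))


def order_by_optimism(responses):
    """Return the responses sorted from most to least optimistic."""
    return sorted(responses, key=optimism, reverse=True)


# Made-up responses, NOT survey data. Each row sums to 1.
responses = [
    [0.05, 0.15, 0.20, 0.30, 0.30],  # fairly pessimistic
    [0.50, 0.30, 0.10, 0.05, 0.05],  # fairly optimistic
    [0.20, 0.20, 0.20, 0.20, 0.20],  # evenly split
]

ordered = order_by_optimism(responses)
for row in ordered:
    print(round(optimism(row), 2), row)
```

Any single score like this flattens a five-way split into one number, so two quite different columns can land next to each other. That is one reason an ordering of this kind can only ever be rough.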

If you are wondering how many machine learning researchers didn’t answer, and what their views looked like: nearly four times as many, and we don’t know. But we did try to make it hard for people to decide whether to answer based on their opinions on our questions, by being uninformative in our invitations. I think we went with saying we wanted to ask about ‘progress in the field’ and offering money for responding.

So it was only when people got inside the survey that they would have discovered that we wanted to know how likely progress in the field is to lead to human extinction, rather than how useful improved datasets are for progress in the field (and actually, we did want to know about that too, and asked; more results to come!). Of the people who got as far as agreeing to take the survey at all, three quarters got as far as this question. So my guess is that this data represents a reasonable slice of machine learning researchers publishing in good venues.

Note that expecting the outcome to be ‘extremely bad’ with high probability doesn’t necessarily indicate support for safety research: for instance, you may think the situation is hopeless. (We did ask several questions about that too.)

(I’ve been putting up a bunch of survey results; this one struck me as particularly interesting to people not involved in AI forecasting.)
