By Katja Grace, 24 May 2015

People have been predicting when human-level AI will appear for many decades. A few years ago, MIRI made a big, organized collection of such predictions, along with helpful metadata. We are grateful, and just put up a page about this dataset, including some analysis. Some of you saw an earlier version of it on an earlier version of our site.

There are lots of interesting things to say about the collected predictions. One interesting thing you might say is ‘wow, the median predictor thinks human-level AI will arrive in the 2030s—that’s kind of alarmingly soon’. While this is true, another interesting thing is that different groups have fairly different predictions. This means the overall median date is especially sensitive to who is in the sample.

In this particular dataset, who is in the sample depends a lot on who bothers to make public predictions. And another interesting fact is that people who bother to make public predictions have shorter AI timelines than people who are surveyed more randomly. This means the predictions you see here are probably biased somewhat toward early dates. We’ll talk about that another time. For now, I’d like to show you some of the interesting differences between groups of people.

We divided the people who made predictions into those in AI, those in AGI, futurists and others. This was a quick and imprecise procedure mostly based on Paul’s knowledge of the fields and the people, and some Googling. Paul doesn’t think he looked at the prediction dates before categorizing, though he probably basically knew some already. For each person in the dataset, we also interpreted their statement as a loose claim about when human-level AI was less likely than not to have arrived and when it was more likely than not to have arrived.

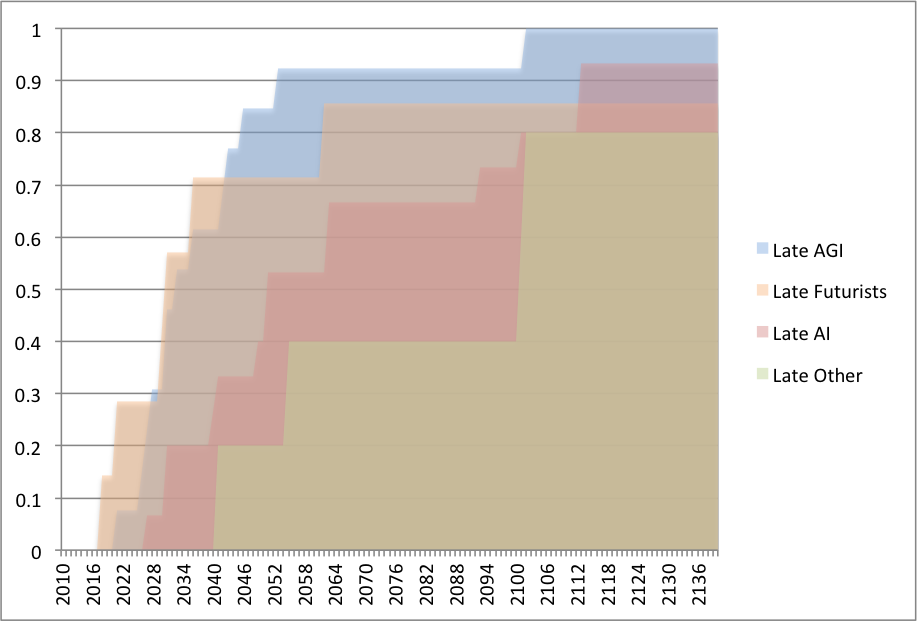

Below is what some of the different groups’ predictions look like, for predictions made since 2000. At each date, the line shows what fraction of predictors in that group think AI will already have happened by then, more likely than not. Note that they may also think AI will have happened before then: statements were not necessarily about the first year on which AI would arrive.
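To make the construction of these curves concrete, here is a minimal sketch of how one point on a line could be computed, assuming each predictor is reduced to a single 'more likely than not' year. The function name and the example years are hypothetical illustrations, not values from the MIRI dataset.

```python
def fraction_predicting_by(years, date):
    """Fraction of predictors whose 'more likely than not' year is at or before `date`."""
    return sum(1 for y in years if y <= date) / len(years)


# Hypothetical 'more likely than not' years for one group (NOT from the dataset).
example_years = [2025, 2030, 2030, 2045, 2060, 2100]

# One (date, fraction) point every 20 years; plotting these gives a rising step curve.
curve = [(d, fraction_predicting_by(example_years, d)) for d in range(2020, 2101, 20)]

for date, fraction in curve:
    print(date, round(fraction, 2))
```

A curve that rises earlier means that group expects human-level AI sooner.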

The groups’ predictions look pretty different, and mostly in ways you might expect: futurists and AGI researchers are more optimistic than other AI researchers, who are more optimistic than ‘others’. The median years given by different groups span seventy years, though this is mostly due to ‘other’, which is a small group. Medians for AI and AGI are eighteen years apart.

The ‘futurist’ and ‘other’ categories are twelve people together, and the line between being a futurist and merely pronouncing on the future sometimes seems blurry. It is interesting that the futurists here look very different from the ‘others’, but I wouldn’t read that much into it. It may just be that Paul’s perception of who is a futurist depends on degree of confidence about futuristic technology.

Most of the predictors are in the AI or AGI categories. These groups have markedly different expectations. About 85% of AGI researchers are more optimistic than the median AI researcher. This is particularly important because ‘expert predictions’ about AI usually come from some combination of AI and AGI researchers, and it looks like what the combination is may alter the median date by around two decades.
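A comparison like 'what share of one group is more optimistic than the other group's median' can be sketched as follows. This is only an illustration under the assumption that each predictor is summarized by one year; the function name and both lists of years are made up for the example and are not the dataset's values.

```python
from statistics import median


def share_earlier_than_median(group, reference):
    """Share of `group` whose predicted year is earlier than the median of `reference`."""
    cutoff = median(reference)
    return sum(1 for y in group if y < cutoff) / len(group)


# Hypothetical predicted years (NOT from the MIRI dataset).
agi_years = [2025, 2028, 2030, 2035, 2040, 2070]
ai_years = [2030, 2040, 2050, 2060, 2080]

share = share_earlier_than_median(agi_years, ai_years)
median_gap = median(ai_years) - median(agi_years)

print(f"share of AGI group earlier than AI median: {share:.2f}")
print(f"gap between medians in years: {median_gap}")
```

The same function, with the arguments swapped, gives the share of AI researchers who are more optimistic than the median AGI researcher.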

Why would AGI researchers be systematically more optimistic than other AI researchers? There are perhaps too many plausible explanations for the discrepancy.

Maybe AGI researchers are—like many—overoptimistic about their own project. Planning fallacy is ubiquitous, and planning fallacy about building AGI naturally shortens overall AGI timelines.

Another possibility is expertise: perhaps human-level AI really will arrive soon, and the AGI researchers are close enough to the action to see this, while it takes time for the information to percolate to others. The AI researchers are also somewhat informed, so their predictions are partway between those of the AGI researchers, and those of the public.

Another reason is selection bias. AI researchers who are more optimistic about AGI will tend to enter the subfield of AGI more often than those who think human-level AI is a long way off. Naturally then, AGI researchers will always be more optimistic about AGI than AI researchers are, even if they are all reasonable and equally well informed. It seems hard to imagine some of the effect not being caused by this.

It matters which explanations are true: expertise means we should listen to AGI researchers above others. Planning fallacy and selection bias suggest we should not listen to them so much, or at least not directly. If we want to listen to them in those cases, we might want to make different adjustments to account for biases.

How can we tell which explanations are true? The shapes of the curves could give some evidence. What would we expect the curves to look like if the different explanations were true? Planning fallacy might look like the entire AI curve being shifted fractionally to the left to produce the AGI curve – e.g. so all of the times are halved. Selection bias would make the AGI curve look like the bottom of the AI curve, or the AI curve with its earlier parts heavily weighted. Expertise could look like dates that everyone in the know just doesn’t predict. Or the predictions might just form a narrower, more accurate, band. In fact all of these would lead to pretty similar looking graphs, and seem to roughly fit the data. So I don’t think we can infer much this way.
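As a rough illustration of why these explanations are hard to tell apart, the sketch below applies two of them to one made-up set of AI-researcher years. It assumes planning fallacy halves the time remaining from a chosen 'now', and that selection bias simply keeps the earlier half of predictors; both are simplifications chosen for the example, and all names and numbers are hypothetical.

```python
from statistics import median


def halve_time_remaining(years, now):
    """Planning-fallacy sketch: every forecast horizon, measured from `now`, is halved."""
    return [now + (y - now) / 2 for y in years]


def select_earlier_half(years):
    """Selection-bias sketch: only the more optimistic half of predictors join the subfield."""
    ordered = sorted(years)
    return ordered[: max(1, len(ordered) // 2)]


# Hypothetical AI-researcher years (NOT from the MIRI dataset).
ai_years = [2030, 2040, 2050, 2070, 2100, 2150]
now = 2015

shifted = halve_time_remaining(ai_years, now)
selected = select_earlier_half(ai_years)

print("AI median:", median(ai_years))
print("planning-fallacy median:", median(shifted))
print("selection-bias median:", median(selected))
```

In this toy case both transformations pull the median earlier by a broadly similar amount, which is in line with the point that curve shapes alone do not separate the hypotheses well.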

Do you favor any of the hypotheses I mentioned? Or others? How do you distinguish between them?

Our page about demographic differences in AI predictions is here.

Our page about the MIRI AI predictions dataset is here.
