All pages and blog posts

Uncategorized

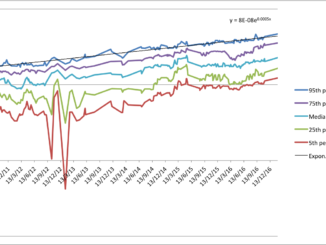

Continuity of progress

AI Timelines

Accuracy of AI Predictions

Blog

AI Timelines

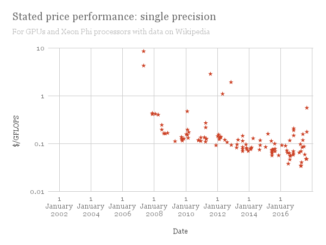

Hardware and AI Timelines